Journalist’s Guide to Verifying User-Submitted Photos: Deepfake Detection for Newsrooms

April 11, 2026 · akshay

In an era where AI-generated images can be created in seconds, newsrooms face an unprecedented verification crisis. User-submitted photos—once the backbone of citizen journalism—now require rigorous scrutiny before publication. This guide provides professional verification protocols for journalists, photo editors, and newsroom leaders.

Whether you’re verifying breaking news images from social media or archival photos from contributors, these standards protect your publication’s credibility and legal standing.

The Verification Crisis in Modern Journalism

The proliferation of deepfakes has transformed newsroom workflows. Consider these developments:

- AI image generation tools create over 20 million images daily

- Deepfake incidents in news contexts increased 900% in 2024-2025

- Legal liability for publishing manipulated images now extends to news organizations

Traditional verification methods—reverse image searches and source checking—remain essential but are no longer sufficient alone.

Pre-Publication Verification Protocol

Step 1: Source Authentication

Before technical analysis, establish provenance:

- Contact the submitter directly via phone or video call

- Request RAW files or original camera exports (not screenshots)

- Verify the submitter was physically present at the claimed location/time

- Check submitter’s history and credibility

Step 2: Technical Screening

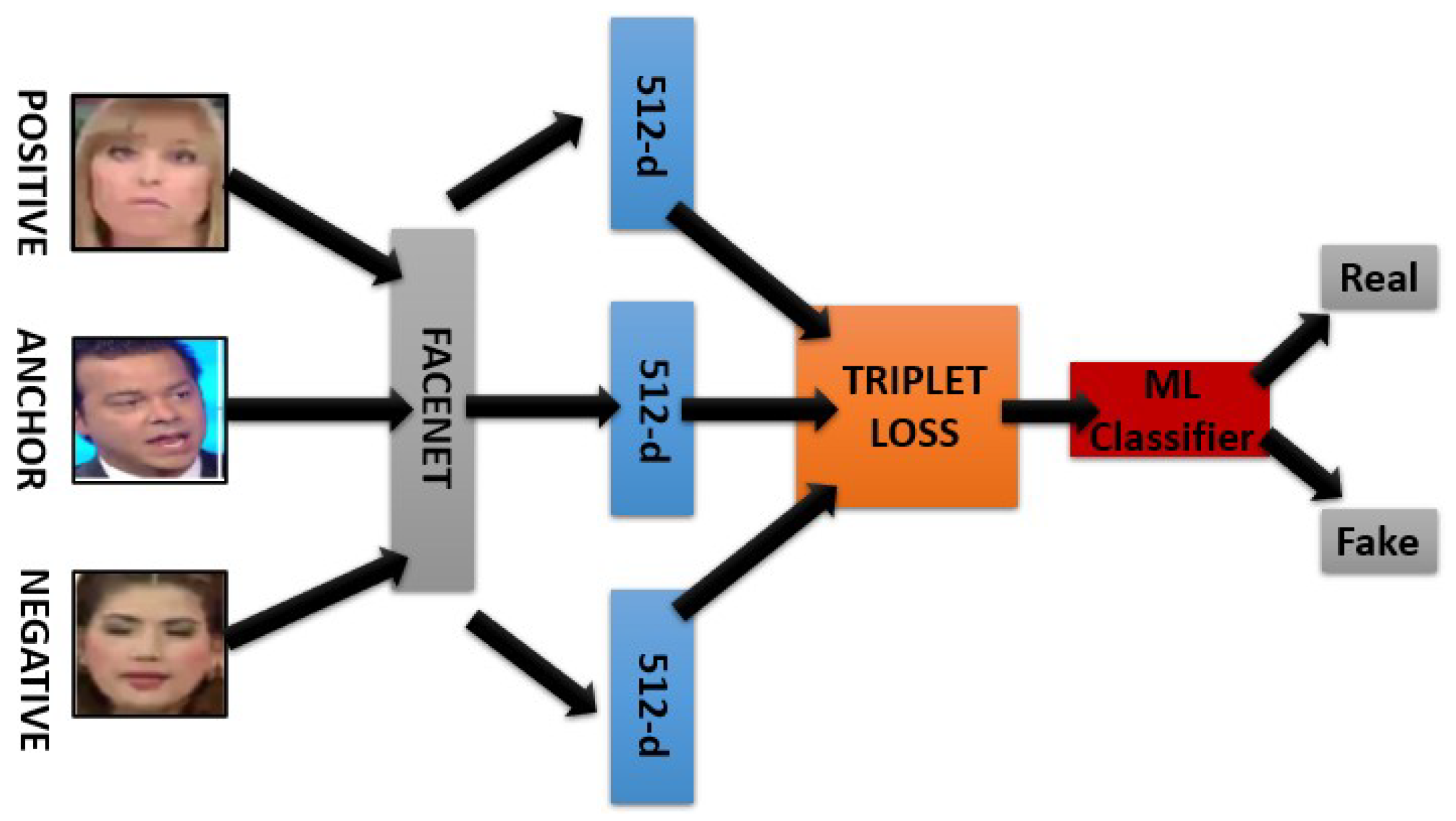

Run automated deepfake detection on all submitted images. Our newsroom screening tool provides:

- Instant risk scoring (0-100 scale)

- Technical analysis of compression patterns

- Metadata examination

- Written documentation for editorial records

Note: Automated screening is your first filter, not your final decision. High scores require escalation.
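To make the "first filter" idea concrete, here is a minimal sketch of how a newsroom might route an image based on its 0-100 risk score. The two cut-off values and the `Action` names are illustrative assumptions, not settings from our screening tool; calibrate them against your own results.

```python
from enum import Enum


class Action(Enum):
    # Hypothetical next steps; a low score never clears an image on its own.
    PROCEED = "continue with standard editorial checks"
    ENHANCED = "hold for enhanced review by a photo editor"
    FORENSIC = "escalate to forensic analysis"


# Assumed cut-offs on the 0-100 risk scale (not vendor defaults).
ENHANCED_THRESHOLD = 40
FORENSIC_THRESHOLD = 70


def triage(risk_score: int) -> Action:
    """Map an automated risk score (0-100) to the next editorial step."""
    if not 0 <= risk_score <= 100:
        raise ValueError(f"risk score must be between 0 and 100, got {risk_score}")
    if risk_score >= FORENSIC_THRESHOLD:
        return Action.FORENSIC
    if risk_score >= ENHANCED_THRESHOLD:
        return Action.ENHANCED
    return Action.PROCEED
```

Note that even the lowest outcome still sends the image through source authentication and normal editorial checks, which keeps the automated score in its role as a filter and not a verdict.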

Step 3: Forensic Analysis (High-Risk Content)

For images with elevated risk scores, or breaking news images where the stakes are high:

- Request Human Review (£149 per image, 7-day turnaround)

- Receive written forensic assessment suitable for legal documentation

- Request analyst credentials if the assessment may be used in court proceedings

Red Flags: When to Escalate Verification

Immediate escalation triggers for newsroom editors:

- Political content: Images of candidates, protests, or government officials during election cycles

- Crisis events: Natural disasters, conflicts, or accidents where verification affects public safety

- Legal implications: Photos used as evidence or affecting active litigation

- Anonymous or unverified sources: Submissions from anonymous, unverified, or first-time contributors

- Viral potential: Images likely to spread rapidly across social platforms

Building Newsroom Verification Standards

Create Verification Tiers

Tier 1 (Automated): All user-submitted photos

Tier 2 (Enhanced): Breaking news + political content

Tier 3 (Forensic): Legal proceedings + high-stakes exclusives
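The three tiers above can be written down as a small rule so every desk applies them the same way. This is a sketch under stated assumptions: the `Submission` fields are hypothetical flags an editor would set at intake, and the rule that the highest matching tier wins is our reading of the tiers, not a fixed standard.

```python
from dataclasses import dataclass


@dataclass
class Submission:
    # Hypothetical intake flags set by the editor handling the photo.
    breaking_news: bool = False
    political: bool = False
    legal_proceedings: bool = False
    high_stakes_exclusive: bool = False


def verification_tier(submission: Submission) -> int:
    """Return 1 (automated), 2 (enhanced), or 3 (forensic)."""
    # Check the strictest tier first so the highest matching tier wins.
    if submission.legal_proceedings or submission.high_stakes_exclusive:
        return 3
    if submission.breaking_news or submission.political:
        return 2
    # Every user-submitted photo gets at least automated screening.
    return 1
```

For example, `verification_tier(Submission(political=True))` returns 2, while a political image that is also tied to legal proceedings returns 3.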

Documentation Requirements

Maintain records for legal protection:

- Screenshots of automated detection results

- Correspondence with image submitters

- Human Review reports for disputed content

- Editorial decision rationale
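One way to keep these records consistent is a single structured entry per image. The sketch below is illustrative only: the field names are assumptions, and the SHA-256 fingerprint is an addition of ours that ties the record to the exact file reviewed. It uses only the Python standard library.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


def fingerprint(image_bytes: bytes) -> str:
    """SHA-256 hex digest identifying the exact file that was reviewed."""
    return hashlib.sha256(image_bytes).hexdigest()


@dataclass
class VerificationRecord:
    # Hypothetical field names; adapt them to your own records system.
    image_sha256: str
    detection_result_file: str       # path to the saved screening screenshot
    submitter_correspondence: str    # reference to the stored messages
    editorial_decision: str
    decision_rationale: str
    human_review_report: Optional[str] = None  # only for disputed content
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_json(record: VerificationRecord) -> str:
    """Serialize a record for the editorial archive."""
    return json.dumps(asdict(record), indent=2, sort_keys=True)
```

Storing the fingerprint alongside the decision lets you show later that the image you published is the same file you screened.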

Legal Considerations for Publishers

Publishing AI-generated images as factual content creates liability risks:

- Defamation: Fake images showing individuals in compromising situations

- Election interference: Politically motivated deepfakes published as news

- Copyright issues: AI training data disputes affecting image rights

Demonstrating “reasonable verification efforts” helps protect against litigation. Our Human Review service provides documented expert analysis suitable for legal defense.

Implementation Checklist for Newsrooms

Actions to implement today:

- ☐ Add deepfake screening to your submission workflow

- ☐ Train editorial staff on basic AI artifact recognition

- ☐ Establish a budget for forensic verification (allow £150-300 per high-stakes image)

- ☐ Update publication guidelines to disclose verification methods

- ☐ Create public correction protocols for mistakenly published AI images

Partner With Verification Experts

Ban Deepfake offers newsroom partnership programs including:

- API access for bulk screening

- Priority Human Review turnaround (48-hour option)

- Staff training sessions on deepfake detection

- Documentation standards for legal compliance

Contact us to discuss enterprise verification solutions tailored to your publication’s volume and requirements.